@inproceedings{MassaCVPR16,

author = "Francisco Massa and Bryan Russell and Mathieu Aubry",

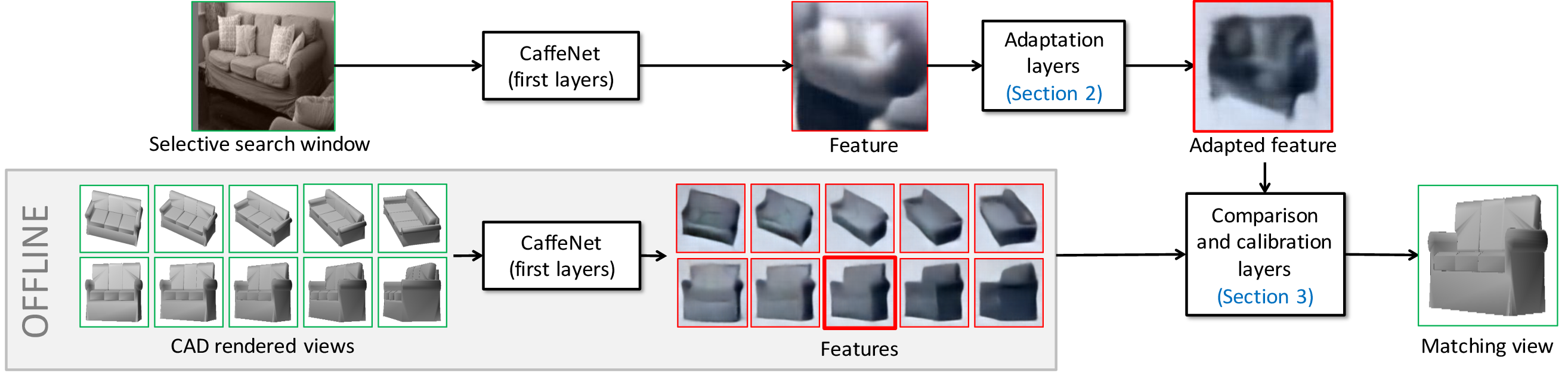

title = "Deep Exemplar 2D-3D Detection by Adapting from Real to Rendered Views",

booktitle = "Conference on Computer Vision and Pattern Recognition (CVPR)",

year = "2016"

}