Learning to Compare Image Patches via Convolutional Neural Networks

Abstract


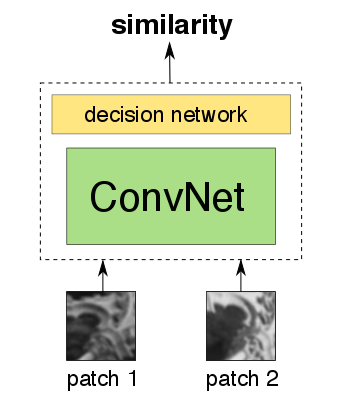

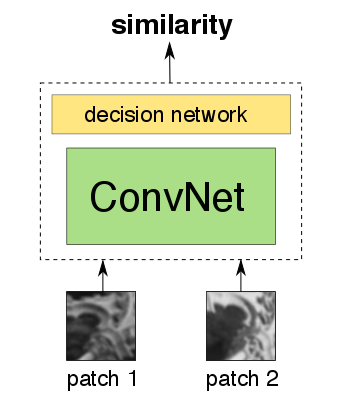

We show how to learn directly from image data (i.e., without resorting to manually-designed features) a general similarity function for comparing image patches, which is a task of fundamental importance for many computer vision problems. To encode such a function, we opt for a CNN-based model that is trained to account for a wide variety of changes in image appearance. To that end, we explore and study multiple neural network architectures, which are specifically adapted to this task. We show that such an approach can significantly outperform the state-of-the-art (June 2015) on several problems and benchmark datasets.


[paper]

[arxiv]

[extended abstract]

[supplementary material]

[poster]


Reference

Learning to Compare Image Patches via Convolutional Neural Networks

Sergey Zagoruyko, Nikos Komodakis

In Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, June 2015

[bib]

@INPROCEEDINGS{ZagoruykoCVPR2015,
  author = {Sergey Zagoruyko and Nikos Komodakis},
  title = {Learning to Compare Image Patches via Convolutional Neural Networks},
  booktitle = {Conference on Computer Vision and Pattern Recognition (CVPR)},
  year = {2015}
}
