¹LIGM, École des Ponts, IP Paris, Univ Gustave Eiffel, CNRS

²Inria, École normale supérieure, CNRS, PSL

³Google DeepMind

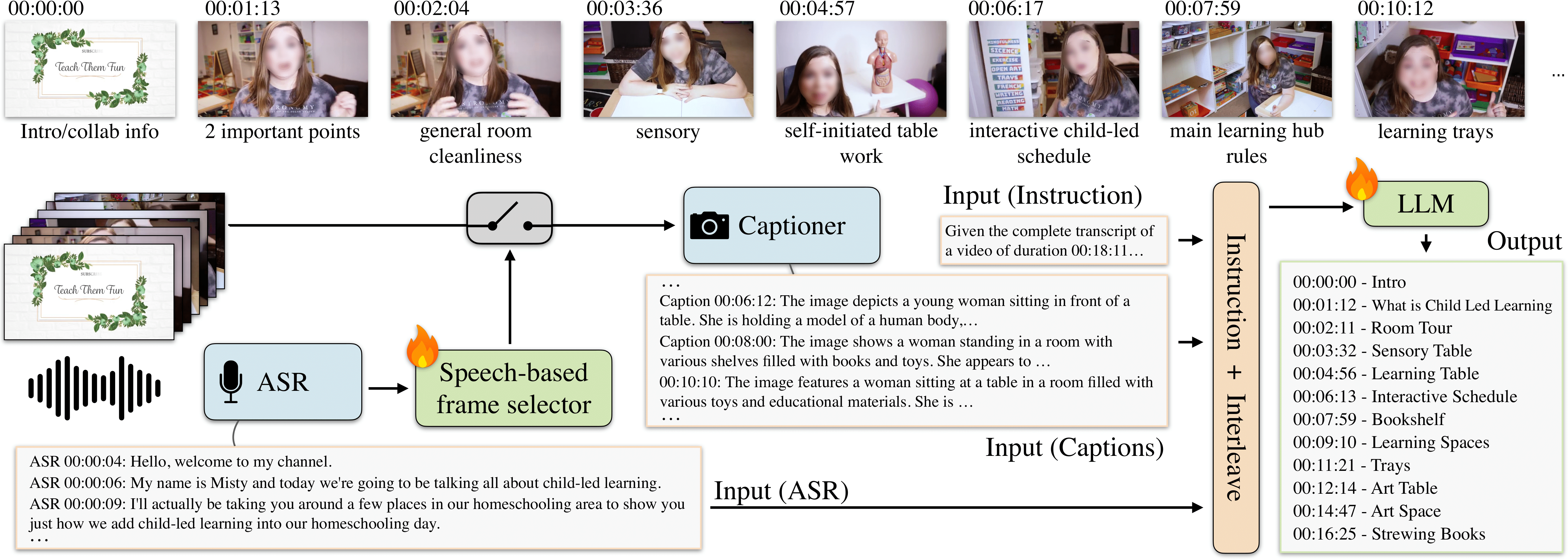

We address the task of video chaptering, i.e., partitioning a long video timeline into semantic units and generating corresponding chapter titles. While relatively underexplored, automatic chaptering has the potential to enable efficient navigation and content retrieval in long-form videos. In this paper, we achieve strong chaptering performance on hour-long videos by efficiently addressing the problem in the text domain with our 'Chapter-Llama' framework. Specifically, we leverage a pretrained large language model (LLM) with a large context window, and feed it (i) speech transcripts and (ii) captions describing video frames, along with their respective timestamps. Because exhaustively captioning all frames is inefficient, we propose a lightweight speech-guided frame selection strategy based on speech transcript content, and demonstrate its advantages experimentally. We train the LLM to output timestamps for the chapter boundaries, as well as free-form chapter titles. This simple yet powerful approach scales to processing hour-long videos in a single forward pass. Our results demonstrate substantial improvements (e.g., 45.3 vs 26.7 F1 score) over the state of the art on the recent VidChapters-7M benchmark. To promote further research, we release our code and models.

Our Chapter-Llama framework first selects video frames to process using speech information. Then we use a visual captioner to map the selected frames into the text space. We feed the resulting captions, along with speech transcripts, to the LLM, which outputs the chapter boundaries and titles jointly as a single sequence of tokens.
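The text-domain interface described above can be illustrated with a minimal Python sketch. It assumes the frames have already been selected and captioned, and it only shows how timestamped speech and caption segments might be interleaved into one LLM input and how a generated chapter list might be parsed back. The `Segment` type, the `HH:MM:SS` line layout, the `ASR`/`Caption` labels, and the function names are illustrative assumptions, not the released implementation.

```python
import re
from dataclasses import dataclass


@dataclass
class Segment:
    start: float  # seconds from the start of the video
    source: str   # hypothetical label: "ASR" (speech) or "Caption" (frame)
    text: str


def fmt_time(seconds: float) -> str:
    """Format seconds as HH:MM:SS (assumed timestamp layout)."""
    s = int(seconds)
    return f"{s // 3600:02d}:{s % 3600 // 60:02d}:{s % 60:02d}"


def build_input(asr: list[Segment], captions: list[Segment]) -> str:
    """Interleave speech and caption segments in chronological order."""
    merged = sorted(asr + captions, key=lambda seg: seg.start)
    return "\n".join(
        f"{fmt_time(seg.start)} {seg.source}: {seg.text}" for seg in merged
    )


# Assumed output layout: one "HH:MM:SS Title" line per chapter.
CHAPTER_LINE = re.compile(r"^(\d{2}):(\d{2}):(\d{2})\s+(.+)$")


def parse_chapters(llm_output: str) -> list[tuple[int, str]]:
    """Return (start_in_seconds, title) pairs; skip lines that do not match."""
    chapters = []
    for line in llm_output.splitlines():
        match = CHAPTER_LINE.match(line.strip())
        if match:
            h, m, s, title = match.groups()
            chapters.append((int(h) * 3600 + int(m) * 60 + int(s), title.strip()))
    return chapters
```

In this sketch, the string returned by `build_input` would be passed to the fine-tuned LLM, and `parse_chapters` would turn its single generated sequence back into boundaries and titles. Lines that do not follow the assumed layout are dropped rather than repaired.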

@InProceedings{ventura25chapter,
  title     = {{Chapter-Llama}: Efficient Chaptering in Hour-Long Videos with {LLM}s},
  author    = {Lucas Ventura and Antoine Yang and Cordelia Schmid and G{\"u}l Varol},
  booktitle = {CVPR},
  year      = {2025}
}

This work was granted access to the HPC resources of IDRIS under the allocation 2024-AD011014696 made by GENCI. This work was funded in part by the ANR project CorVis ANR-21-CE23-0003-01, a research gift from Google, the French government under management of Agence Nationale de la Recherche as part of the "France 2030" program, reference ANR-23-IACL-0008 (PR[AI]RIE-PSAI project), and the ANR project VideoPredict ANR-21-FAI1-0002-01. Cordelia Schmid would like to acknowledge the support of the Körber European Science Prize. The authors would also like to thank Guillaume Astruc, Nikos Athanasiou, Hyolim Kang, and Nicolas Dufour for their feedback.